Before we had ray tracing

To display images in a game, a process also known as rendering, games use a number of techniques collectively known as rasterization. The first step is to project three-dimensional data, or "polygons" as we more familiarly call them, onto a two-dimensional plane to form an image, as in this picture.

By Konrad Conrad - Own work, CC BY-SA 3.0, Link
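To make the "project onto a 2D plane" step a bit more concrete, here is a minimal sketch of a perspective projection. It assumes a camera at the origin looking down the +z axis; the function names, the focal length and the sample triangle are all made up for illustration and are not taken from any particular engine.

```python
def project_point(point, focal_length=1.0):
    """Perspective-project a camera-space point (x, y, z) onto the plane z = focal_length."""
    x, y, z = point
    if z <= 0:
        raise ValueError("point must be in front of the camera (z > 0)")
    # Points farther away (larger z) land closer to the centre of the image.
    return (focal_length * x / z, focal_length * y / z)


def to_pixel(projected, width, height):
    """Map a projected point in [-1, 1] x [-1, 1] to integer pixel coordinates."""
    u, v = projected
    px = int((u + 1.0) * 0.5 * (width - 1) + 0.5)
    py = int((1.0 - v) * 0.5 * (height - 1) + 0.5)  # image rows grow downward
    return (px, py)


# A hypothetical triangle: two corners near the camera, one farther away.
triangle = [(-1.0, -1.0, 2.0), (1.0, -1.0, 2.0), (0.0, 1.0, 4.0)]
projected = [project_point(p) for p in triangle]
pixels = [to_pixel(p, 101, 101) for p in projected]
```

Once the three corners are on the 2D plane, the rasterizer only has to decide which pixels fall inside the triangle; nothing in this step knows how light actually travels.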

The next step is to work out what color each point on the polygon should be. This step is called shading. At first we had no shading at all, and the output was just lines, called "wireframe". That evolved into flat shading, then Gouraud shading, and then a long string of further techniques. If you have been following computer graphics for a long time, you will notice that almost all of these ideas have ended up in the GPU. For example: specular highlights (1973), where some parts of an object turn white when light hits them (picture a shiny spoon); environment mapping (1976), which pastes an image of the surroundings onto polygons to simulate reflections; shadow mapping (1978), which gives us shadows; bump mapping (1978), which makes surfaces look rough and which only became practical once we had pixel shaders in the DirectX 9 era; and the most recent one we use, ambient occlusion (1994), which darkens the corners of a room or the edges of objects and only reached games a few years ago. I have put the years in parentheses to show how long it took for these ideas to become real-time in games.

But in the end, we can probably all see that the games we play today, no matter how beautiful they look, still don't look as realistic as a scene in a movie. Why???

Because all the techniques I've mentioned are just an "approximation" of some phenomenon of light. Rendering is really an attempt to simulate how our eyes see light: light from a light source hits an object and then reflects into our eyes. As long as we can only "approximate" it, and not "simulate" how the light actually hits the object, the picture simply can't be "realistic", right?

So if we want it to be that realistic, we have to simulate that phenomenon, and that is ray tracing.

Ray Tracing?

Ray tracing could be called the end goal of real-time graphics for 2D monitors.

If you've ever used a 3D design program such as SketchUp (with Lumion or TheaRender), 3ds Max, Maya or Blender, you will know that the output of these programs and the images that come out of the various game engines people build games with are still very far apart in how "realistic" or "photorealistic" they look.

That's because, when those programs render, that is, convert 3D data into an image for the 2D screens we use today, they use ray-tracing techniques. As the name suggests, it traces a ray (of light; strictly it isn't a "beam", but I don't know what else to call it): where does it hit, what color results when it bounces onto other objects, and most importantly, what color does that ray end up being? It works in reverse from what we might expect, though. The tracing does not start from the light source. Rather, it starts from the "eye" or "camera" used to view the 3D data, which is known as "eye-based ray tracing". (Tracing from the light source, "light-based", exists too, but read a little further and you'll understand why we don't do that.)

By Henrik - Own work, CC BY-SA 4.0, Link

Sounds like a very far-fetched idea, good lord~ tracing light ray by ray, how is that even possible! Believe it or not, this idea was first proposed in 1968 by Arthur Appel (unfortunately, Wikipedia has no information on this researcher). The technique is now known as "ray casting" and, most importantly, it was used in many, many classic games. The person behind that is none other than John Carmack, co-founder of id Software, who gave us the first-person shooter (FPS) games we play today. He was among the first to use it, or was he the very first?

Ray Tracing's Grandfather: Ray Casting

Arthur Appel's principle is to divide the output image into cells (pixels) and, for each cell, shoot a ray out and check which object it hits. If it hits something, the viewer must be able to see that surface. As for what color the surface should be (shading), Appel did not specify.
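The per-pixel idea above can be sketched in a few lines. This is only my own toy illustration, not Appel's original formulation: the scene is made of spheres, the eye sits at the origin, the image plane is at z = 1, and every name here (`ray_sphere_t`, `cast`, the sample scene) is an assumption made up for the example. Just like the description, it only answers "which surface is seen" and leaves shading out.

```python
import math


def ray_sphere_t(origin, direction, center, radius):
    """Distance along a unit-length ray to the nearest hit on a sphere, or None."""
    oc = tuple(o - c for o, c in zip(origin, center))
    b = sum(d * o for d, o in zip(direction, oc))
    c = sum(o * o for o in oc) - radius * radius
    disc = b * b - c
    if disc < 0:
        return None  # the ray misses the sphere entirely
    t = -b - math.sqrt(disc)
    return t if t > 0 else None  # simplification: hits behind the eye are ignored


def cast(width, height, spheres):
    """For every pixel, shoot one ray from the eye and record which sphere is seen first."""
    eye = (0.0, 0.0, 0.0)
    image = []
    for j in range(height):
        row = []
        for i in range(width):
            # Pixel centre on an image plane at z = 1 spanning [-1, 1] in x and y.
            x = (i + 0.5) / width * 2 - 1
            y = 1 - (j + 0.5) / height * 2
            length = math.sqrt(x * x + y * y + 1)
            d = (x / length, y / length, 1 / length)
            nearest, seen = math.inf, None
            for name, center, radius in spheres:
                t = ray_sphere_t(eye, d, center, radius)
                if t is not None and t < nearest:
                    nearest, seen = t, name
            row.append(seen)
        image.append(row)
    return image


# Hypothetical scene: a small sphere "B" in front of a bigger sphere "A".
scene = [("A", (0.0, 0.0, 5.0), 1.0), ("B", (0.0, 0.0, 3.0), 0.5)]
visible = cast(5, 5, scene)
```

Each entry of `visible` is the name of the surface seen through that pixel, or `None` where the ray hits nothing.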

An example of a game that uses this technique is Hovertank 3D, for which John Carmack spent 6 weeks building the engine. The GIF (which I hotlinked from Wikipedia, but I couldn't find the article it came from, I'm really sorry) should be enough to illustrate it: rays are shot out from the red dot representing the player, so the game knows which wall blocks on the map are visible to the player. It then works out how to render only those blocks (surfaces) instead of processing the whole scene. It sounds like a rather simple concept looking at it now, but remember that this was some 30 years ago, when we didn't even have 3D games. It was a very new idea.
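To give a feel for the "which wall blocks can the player see" step, here is a small sketch that walks rays through a 2D grid map cell by cell (a DDA-style grid walk). To be clear, this is not the actual Hovertank 3D code, which I have not seen; the map, the function names and the field-of-view numbers are all invented for illustration, and the sketch assumes the map is fully enclosed by walls.

```python
import math

# Hypothetical map: '#' is a wall block, '.' is empty floor.
GRID = [
    "#####",
    "#...#",
    "#..##",
    "#...#",
    "#####",
]


def first_wall(px, py, angle, grid=GRID, max_steps=64):
    """Walk a ray cell by cell and return the (col, row) of the first wall block it hits."""
    dx, dy = math.cos(angle), math.sin(angle)
    col, row = int(px), int(py)
    step_x = 1 if dx > 0 else -1
    step_y = 1 if dy > 0 else -1
    # Ray length needed to cross one whole cell horizontally / vertically.
    delta_x = abs(1 / dx) if dx != 0 else math.inf
    delta_y = abs(1 / dy) if dy != 0 else math.inf
    # Ray length from the start position to the first vertical / horizontal grid line.
    if dx == 0:
        side_x = math.inf
    else:
        side_x = ((col + 1 - px) if dx > 0 else (px - col)) * delta_x
    if dy == 0:
        side_y = math.inf
    else:
        side_y = ((row + 1 - py) if dy > 0 else (py - row)) * delta_y
    for _ in range(max_steps):
        # Step into whichever neighbouring cell the ray reaches first.
        if side_x < side_y:
            side_x += delta_x
            col += step_x
        else:
            side_y += delta_y
            row += step_y
        if grid[row][col] == "#":
            return (col, row)
    return None


def visible_walls(px, py, facing, fov=math.pi / 3, rays=60):
    """Cast a fan of rays across the field of view and collect the wall blocks they hit."""
    hits = set()
    for i in range(rays):
        angle = facing - fov / 2 + fov * i / (rays - 1)
        hit = first_wall(px, py, angle)
        if hit is not None:
            hits.add(hit)
    return hits


seen = visible_walls(1.5, 1.5, 0.0)  # player in cell (1, 1), facing +x
```

Only the blocks in `seen` need to be drawn; walls behind the player or hidden behind other walls never show up in the set.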


And then the real thing arrives: Recursive Ray Tracing

About 10 years later, in 1979, Turner Whitted proposed the concept of ray tracing that we use today. In the original ray casting, we shot a ray at a surface and stopped there. Whitted said we have to keep following the light onward: when a ray hits an object, three new rays are spawned.

- Reflection: a ray of this kind goes off to find what will be reflected on the first surface we see.

- Refraction: a ray of this kind passes through the object. Follow it to see what lies beyond the object, then combine the colors.

- Shadow: a ray of this kind is traced back toward the light source. If something is in the way, that point is in shadow.

Visually, it looks something like this (though I don't know much about the equations, ha ha).

By Nikolaus Leopold (Mangostaniko) - Own work, CC0, Link
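For the curious, the three kinds of rays can be sketched in code without too much math. The following is a toy recursive tracer under heavy assumptions of my own: the scene contains only spheres, there is a single point light, the result is one brightness number instead of a color, and shadow rays treat transparent objects as opaque. All the names (`sphere`, `trace`, `EPS`, `MAX_DEPTH`) are invented for this sketch and do not come from Whitted's paper or from any renderer.

```python
import math

EPS = 1e-4      # hits closer than this are ignored, so a ray never re-hits its own origin
MAX_DEPTH = 3   # stop following bounces after this many levels


def sub(a, b):
    return tuple(x - y for x, y in zip(a, b))

def add(a, b):
    return tuple(x + y for x, y in zip(a, b))

def mul(a, s):
    return tuple(x * s for x in a)

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def norm(a):
    return mul(a, 1 / math.sqrt(dot(a, a)))

def sphere(center, radius, diffuse=0.0, reflect=0.0, transmit=0.0, ior=1.5):
    """Hypothetical scene object: a sphere plus how much it scatters, mirrors and transmits."""
    return dict(center=center, radius=radius, diffuse=diffuse,
                reflect=reflect, transmit=transmit, ior=ior)

def hit_sphere(o, d, s):
    """Nearest distance (beyond EPS) along the unit ray (o, d) to sphere s, or None."""
    oc = sub(o, s["center"])
    b = dot(d, oc)
    disc = b * b - (dot(oc, oc) - s["radius"] ** 2)
    if disc < 0:
        return None
    root = math.sqrt(disc)
    for t in (-b - root, -b + root):
        if t > EPS:
            return t
    return None

def nearest(o, d, scene):
    """Return (distance, sphere) for the closest hit, or (inf, None) on a miss."""
    best = (math.inf, None)
    for s in scene:
        t = hit_sphere(o, d, s)
        if t is not None and t < best[0]:
            best = (t, s)
    return best

def refract(d, n, ior):
    """Bend direction d through a surface with normal n (Snell's law); None if it cannot pass."""
    cosi, eta = -dot(d, n), 1 / ior
    if cosi < 0:  # the ray is leaving the object: flip the normal and the ratio
        cosi, n, eta = -cosi, mul(n, -1), ior
    k = 1 - eta * eta * (1 - cosi * cosi)
    if k < 0:
        return None  # total internal reflection
    return norm(add(mul(d, eta), mul(n, eta * cosi - math.sqrt(k))))

def trace(o, d, scene, light, depth=0):
    """Brightness seen along the ray (o, d): local shading plus the three new rays."""
    t, s = nearest(o, d, scene)
    if s is None or depth > MAX_DEPTH:
        return 0.0
    p = add(o, mul(d, t))
    n = norm(sub(p, s["center"]))
    # 1) Shadow ray: look from the hit point toward the light; anything in the way means shadow.
    to_light = sub(light, p)
    dist = math.sqrt(dot(to_light, to_light))
    l = mul(to_light, 1 / dist)
    t_block, _ = nearest(p, l, scene)
    lit = max(0.0, dot(n, l)) if t_block >= dist else 0.0
    value = s["diffuse"] * lit
    # 2) Reflection ray: mirror the incoming direction about the surface normal.
    if s["reflect"] > 0:
        r = sub(d, mul(n, 2 * dot(d, n)))
        value += s["reflect"] * trace(p, r, scene, light, depth + 1)
    # 3) Refraction ray: continue through the object and add what lies beyond it.
    if s["transmit"] > 0:
        rd = refract(d, n, s["ior"])
        if rd is not None:
            value += s["transmit"] * trace(p, rd, scene, light, depth + 1)
    return value

# Hypothetical scene: a mirror ball in front of the eye, a matte ball behind the eye.
scene = [sphere((0.0, 0.0, 5.0), 1.0, reflect=1.0),
         sphere((0.0, 0.0, -5.0), 1.0, diffuse=0.8)]
brightness = trace((0.0, 0.0, 0.0), (0.0, 0.0, 1.0), scene, light=(0.0, 0.0, 0.0))
```

The important part is that `trace` calls itself: the mirror ball has no brightness of its own, so whatever the eye sees on it comes entirely from following the reflected ray back to the matte ball.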

And this is an example image (from Wikipedia) rendered with the recursive ray tracing technique. You can see that it is very realistic: there are reflections on the wall, and on the vase behind as well, which other rendering techniques cannot produce because they don't actually follow the light.

The remaining question is: why not follow the light from the source? Because with shadow, refraction and reflection, an enormous amount of light emanates from a light source, and some of it never reaches our eyes at all. Chasing it would be wasted processing.
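A rough back-of-the-envelope sketch shows how wasteful tracing from the light would be. It assumes a point light that emits evenly in all directions and counts only rays that fly straight into a small disc standing in for the eye or camera (no bounces); the function name and the sample sizes are my own made-up numbers, purely for illustration.

```python
import math


def fraction_reaching_eye(aperture_radius, distance):
    """Fraction of rays from an even point light that land directly on a small disc facing it."""
    # Area of the disc divided by the area of the sphere of that radius around the light.
    return (math.pi * aperture_radius ** 2) / (4 * math.pi * distance ** 2)


# Hypothetical numbers: a 1 cm aperture, 2 m away from the light.
fraction = fraction_reaching_eye(0.01, 2.0)
rays_needed_per_hit = 1 / fraction
```

Under these assumptions, only a few rays in a million land on the aperture directly, which is why starting from the eye and working backward is the practical choice.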

But even if we cut down the amount of light we have to follow, it goes without saying that sitting and following the light ray by ray like this, even if we only take the visible rays, takes a very long time. That's why we've never seen it used in games before. Take our favorite benchmark, Cinebench, as an example: rendering just 1 image takes more than 30 seconds even on a very strong CPU. Or take the TheaRender benchmark, which uses the CPU and GPU together: it still takes almost 5 minutes to render only 4 images!

Oh? So how do we get it into games?

The funny thing is that Jen-Hsun Huang (Jensen) said it took 10 years of development to get real-time ray tracing in games, and sure enough, a video showing ray tracing in a game appeared almost exactly 10 years earlier (10 years and 3 days, to be precise): Intel's research on bringing ray tracing to Quake Wars. As far as I understand, that one used ray tracing for the entire image we see; put simply, it was Quake Wars with a true ray-traced renderer.

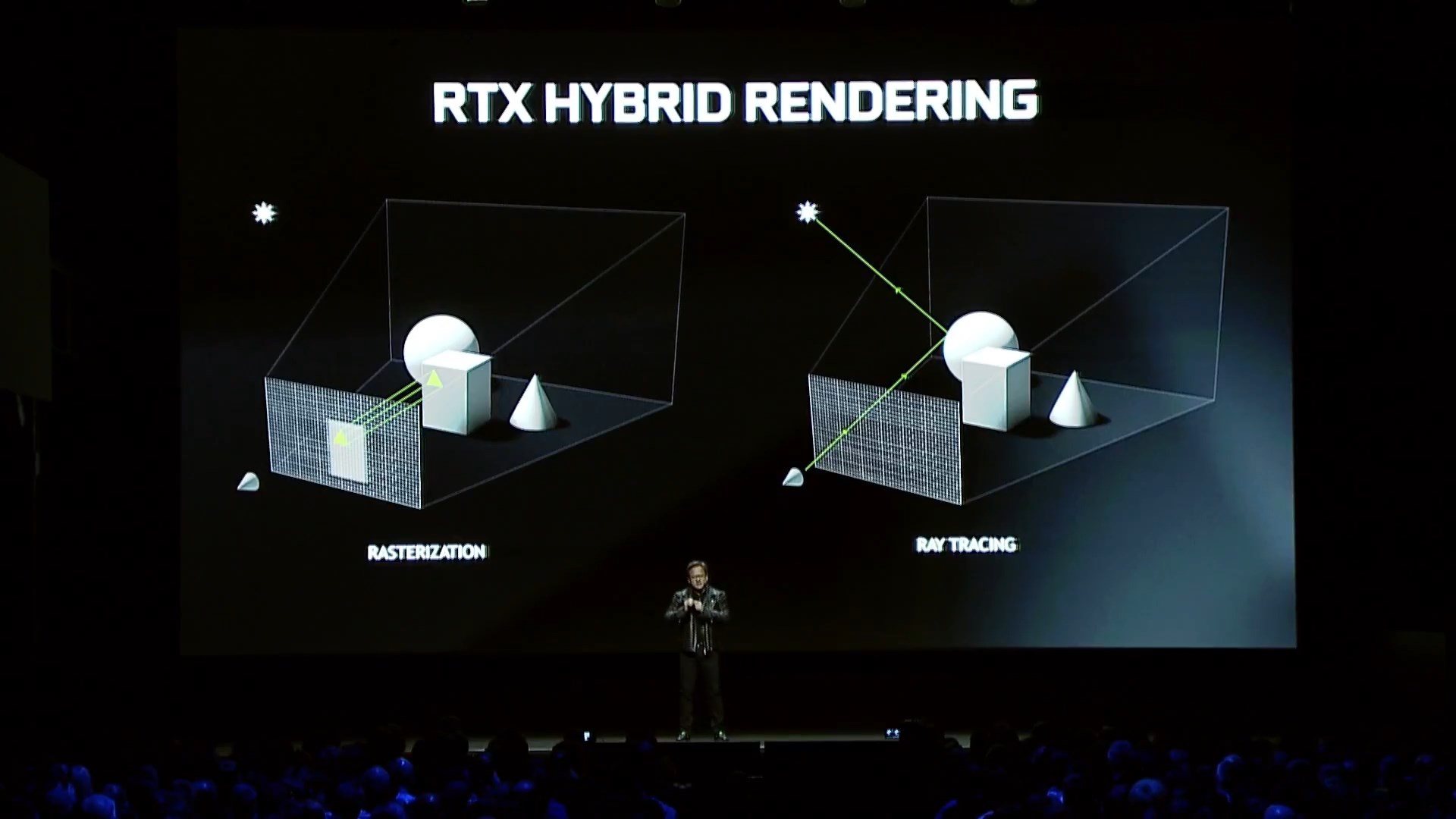

RTX, however, does not ray trace the whole image. It is a hybrid system: it keeps the strengths of traditional rasterization, which we have used for so long that engines are plentiful, well tuned and already fast, and combines it with ray tracing for certain effects that rasterization can't do. These are called ray-traced effects. (*All images in this section are screen captures from the video.)

As for ray-traced effects, Nvidia actually announced them 5 months ago, and the most hyped demo was probably this one, running on Unreal Engine. Pay close attention to the reflections on Phasma's armor; that can't be done without ray tracing.

At the time, Epic said the demo ran at 24fps on a DGX Station (4x Volta), which costs as much as a Porsche ($69,000). There are many other demos to watch as well, though they don't say which GPU they ran on. I've collected them here.

Turing also arrives in GeForce RTX 20

And last night Jensen dropped the bomb: NVIDIA GeForce RTX~! It uses the Turing architecture. Whoa, we can now get ray tracing in consumer graphics cards. Even more brutal, a single Turing (I don't know which model) is 20% faster than 4 Voltas! So what are the people who bought a DGX 5 months ago supposed to do? And compared to Pascal, Turing handles RTX workloads almost 7 times better!!

At the event there were also demos from real games. It seems whoever planned the demos decided to show us the three things ray tracing does, one game for each.

- Shadow of the Tomb Raider shows ray-traced shadows. We can already fake shadows on the ground quite well, and they look very pretty, but with ray tracing you can see that the green and orange lights mix their colors properly. And the shadow we're used to seeing, a person-shaped cutout lying at an angle on the floor, becomes very realistic: the shadow near the feet is sharper than the shadow of the upper body.

- Metro Exodus shows Global Illumination (GI) with ray tracing: when light hits an object, it bounces on to the surrounding objects as well, such as the walls and ceiling. Previously we "faked" this by placing extra lights in the room, but now it is real global illumination. The light from outside the window actually hits the cloth, and the cloth then bounces light up onto the ceiling, while the parts of the wall that get no bounced light stay dark, as you can see. In the actual game, though, I expect they will adjust the lighting afterwards so that the room looks normally lit and the window is blown out to white with nothing visible through it, just like when we really look around a room.

- Battlefield V shows reflections. We've had reflections for a long time, but they were fake ones; notice that they only appear where we expect them, for example on water, on cars, on some glass. One way of making a reflection is to take an image of what should be reflected, mostly the sky, the surrounding scenery, or perhaps just white light, and paste it onto the water surface or the car (environment mapping or cube mapping; the first time we could do this in games, it was exciting enough). If you look closely at most driving games we play, cars don't reflect other cars. Say a red car is driving next to a white car: we won't see the white car reflected on the red car, because that would mean rendering several times: put a camera on the red car, render the white car, then paste that image back onto the red car. In Battlefield V, however, the reflections come from what is actually happening in the game, concentrating on things that stand out and add realism, such as bursts of fire. (But if you look closely, the BF V demo has no ray-traced shadows at all; the car looks like it's floating on the road.)

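The "paste a picture of the surroundings" trick described for Battlefield V's predecessors can be sketched like this. It is only an illustration under my own assumptions: directions are plain (x, y, z) tuples with +y pointing up, and the function names and face labels are made up rather than taken from any graphics API.

```python
def reflect(d, n):
    """Mirror the viewing direction d about the unit surface normal n."""
    k = 2 * sum(a * b for a, b in zip(d, n))
    return tuple(a - k * b for a, b in zip(d, n))


def cube_face(direction):
    """Name of the cube-map face a direction points at: the axis with the largest magnitude."""
    axis = max(range(3), key=lambda i: abs(direction[i]))
    sign = "+" if direction[axis] >= 0 else "-"
    return sign + "xyz"[axis]


# Looking straight down at flat water (normal pointing up) reflects straight up.
looking_down = (0, -1, 0)
water_normal = (0, 1, 0)
face = cube_face(reflect(looking_down, water_normal))  # the "sky" face of the cube
```

The lookup only ever returns a pixel from the six pre-made pictures, so anything that is not in those pictures, such as the white car driving alongside, can never appear in the reflection. A reflection ray, by contrast, hits whatever is actually there.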

The phrases I heard most in the demos were "You just turn it on!" and "It just works!" That's because, until now, producing these effects took a lot of effort from the production team in writing shaders and setting parameters to add realism. As I understand it, with GI you have to go through every room and decide how much GI to put in each; with shadows you have to think carefully about where the lights go (and then merge the overlapping shadows into a single map); and with reflections you have to build them by hand or find the right image for each area of the scene. I think the real benefit of RTX is that we can get more realistic games without the development team having to sit around making textures or writing special shaders for each of these effects. They just hand the burden to the ray tracing system: simply "turn it on".

So which graphics cards are available?

Heh, since this was an NVIDIA RTX launch event, we might assume this is Nvidia-only technology. But the makers of game APIs, one of which is Microsoft, want it to work on the existing Graphics and Compute engines as well, and have released RayTracingFallback separately.

But it is certain that the performance won't be comparable to having dedicated hardware for ray tracing. Take a peek at the GitHub page: the screenshot shows a speed of only about 46 million rays/second, at a frame rate of about 50fps (1000 / 19.6 ≈ 51) or 42fps (1000 / (19.6 + 4.4) ≈ 41.7), while the screen itself reads 50Hz.
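The frame-rate arithmetic above is just "1000 milliseconds divided by the time per frame". Here it is as a tiny sketch; treating 19.6 ms as the ray tracing time and 4.4 ms as everything else in the frame is my own reading of the screenshot, and the function name is made up.

```python
def fps_from_ms(*stage_times_ms):
    """Frames per second for a frame whose stages take the given times in milliseconds."""
    return 1000.0 / sum(stage_times_ms)


rays_per_second = 46e6                      # figure quoted from the screenshot

fps_trace_only = fps_from_ms(19.6)          # counting only the 19.6 ms
fps_whole_frame = fps_from_ms(19.6, 4.4)    # counting the extra 4.4 ms as well
rays_per_frame = rays_per_second / fps_trace_only
```

Rounded, that gives about 51 and 41.7 frames per second, and roughly 900 thousand rays per frame at the quoted speed.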

Meanwhile, the graphics cards released last night, August 20, are rated in the billions of rays/sec (20 times faster).

Interestingly, from now on the ray tracing hardware in GeForce RTX graphics cards can also be used together with various 3D rendering programs.

As Jensen said, "Computer Graphics Has Been Reinvented", and from today on we will have to count the days as "before RTX" and "after RTX".

And the question flooding everyone's inbox is: when will it come to notebooks? For now all I can say is: no information yet. If you want to be among the first in the world to try Turing, you can go pre-order on Nvidia's website. I hope this post gave everyone some new knowledge, more or less :D