Previously, I tested an external GPU with the Shizen by connecting a GTX1060 3GB to it via Thunderbolt 3. Today I'm trying another machine: the NXL GTX1060 (the previous generation of the current model). Don't worry, it isn't broken; it just has to be set up like in the cover photo, and I made it (fairly) pretty. Here's a picture of the underside of our lab rat, the NXL.

For those of you who aren't familiar with the NXL, it's a LEVEL51 notebook built around a desktop CPU, and you can read my review of it here. The current model has been upgraded to RTX (configurable up to a full RTX2070), and I'll write a new review of it later. This model doesn't have Thunderbolt.

No Thunderbolt needed?

First, let's clear up the obvious question: how can we connect a video card when the machine has no Thunderbolt port? The NXL can take an external video card because it has two NVMe slots meant for NVMe SSDs.

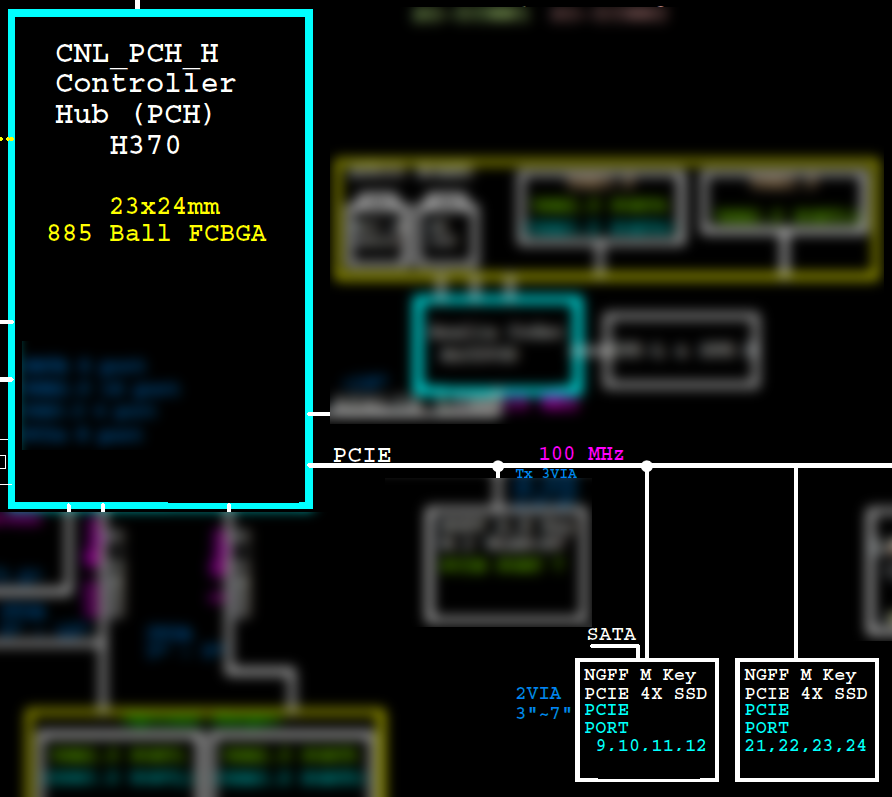

Looking at the NXL block diagram (N960TD / N950TP6), we can see that the two NVMe slots are actually PCI Express x4 slots. The first slot uses PCIe lanes 9-12. The other x4 slot is listed on lanes 21-24, but I think the document is wrong there, because the H370 chipset in the new NXL only has 20 PCIe lanes.

This works because NVMe is a standard that uses PCI Express lanes to connect SSDs to the chipset. NVMe was designed to break through the limitations of AHCI, which is why M.2 NVMe SSDs reach speeds measured in GB/s, far above SATA SSDs, which top out at around 550MB/s.
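The gap between these interfaces follows from their line rates and encodings. Below is a back-of-the-envelope sketch, assuming PCIe 3.0 lanes (what the H370 chipset provides) and ignoring protocol overhead; the function names are illustrative only.

```python
def pcie3_gb_per_s(lanes: int) -> float:
    """Usable PCIe 3.0 bandwidth per direction, in GB/s (1 GB = 1e9 bytes).

    Each lane signals at 8 GT/s with 128b/130b encoding. Packet headers and
    flow control are ignored, so real transfers come out somewhat lower.
    """
    return 8e9 * (128 / 130) * lanes / 8 / 1e9


def sata3_mb_per_s() -> float:
    """SATA III line rate (6 Gb/s) after 8b/10b encoding, in MB/s."""
    return 6e9 * (8 / 10) / 8 / 1e6


if __name__ == "__main__":
    print(f"SATA III:     {sata3_mb_per_s():.0f} MB/s")
    print(f"PCIe 3.0 x4:  {pcie3_gb_per_s(4):.2f} GB/s")   # one M.2 NVMe slot
    print(f"PCIe 3.0 x16: {pcie3_gb_per_s(16):.2f} GB/s")  # a normal desktop GPU slot
```

The ~550MB/s of a SATA SSD sits just under the 600MB/s ceiling, while an x4 slot offers roughly 3.9GB/s, about a quarter of what an x16 graphics slot provides.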

Connecting a video card through an M.2 slot took off in the crypto-mining era, as a way to increase the number of graphics cards a single machine could host. Small companies like ours also have sales channels in China, a major electronics manufacturing hub, so this kind of adapter hardware can be sourced and sold as a solid, finished product.

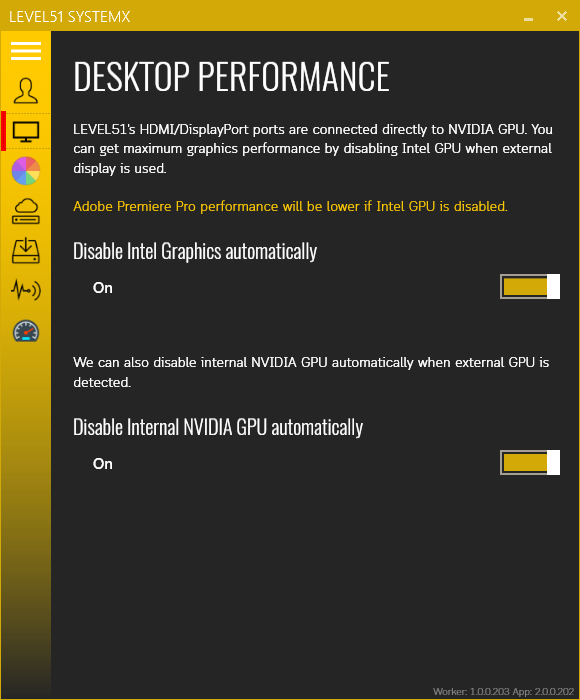

Personally, I'm a tinkerer who likes to put everything together myself. The adapter makers only test as far as their own use case goes (has anyone tried this? Let me know sometime), but I can write programs, so I made some extra software. I added code to SystemX, the utility program for this LEVEL51 machine, that patches a video card connected via NVMe so it can be activated (otherwise Windows won't let the attached card work). It can also turn off the NVIDIA graphics card inside the machine when an external card is plugged in, because in testing, once the machine has two graphics cards, your favorite programs and games get confused about which one to use.
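The GPU-switching rule described above can be sketched as a small decision function. This is a hypothetical illustration only: the `Gpu` type, its `is_external` flag and `gpus_to_disable` are made-up names, and the real SystemX code (including the patch that lets Windows enable the card) is not shown in this post.

```python
from dataclasses import dataclass


@dataclass
class Gpu:
    name: str
    is_external: bool  # hypothetical flag: True if the card sits on the M.2/NVMe adapter


def gpus_to_disable(gpus: list[Gpu]) -> list[Gpu]:
    """Return the internal GPUs to switch off when at least one external GPU is present.

    With no external card attached, nothing is disabled, so the internal GPU
    keeps working as usual.
    """
    if any(g.is_external for g in gpus):
        return [g for g in gpus if not g.is_external]
    return []


if __name__ == "__main__":
    detected = [Gpu("GTX 1060 (internal)", False), Gpu("GTX 1070 (external)", True)]
    for gpu in gpus_to_disable(detected):
        print("disable:", gpu.name)
```

Keeping the rule as a pure function of the detected cards makes it easy to expose as an on/off option, since some workloads (like GPU rendering) benefit from leaving both cards enabled.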

Testing: from actual daily use

The setup I use every day right now is the Kurz, an all-in-one built from our normal PC parts, except that instead of a motherboard I put the video card adapter inside, along with my brother's old GTX 1070 graphics card and a power supply.

If you're wondering how an all-in-one can be a PC, or whether that's even possible, watch the video of it being assembled :D The unit in the video has an i7-9700K (overclocked to 5.0GHz) and an RTX2070. The Kurz screen is a 32" 144Hz panel with 100% sRGB coverage. It's pretty good, and handles both gaming and work well.

The actual computer is the NXL GTX1060 (the current model is already RTX), placed underneath, between the legs of the screen stand. It can run with its lid closed, since this model draws its intake air from the bottom anyway. Peripherals such as the mouse and keyboard connect through a USB hub, and the Kurz's front ports, except the headphone/mic jack, are also wired to this USB hub through adapter cables.

We don't use the NXL's built-in display because, when we tested a video card connected over Thunderbolt 3 on the Shizen, sending the image back to the built-in display had a huge impact on performance. An external graphics card works best when paired with an external monitor, so the image goes straight to the monitor connected to that card. That's exactly what the Kurz all-in-one is: it has both a screen and a graphics card inside, making it a complete solution for an external video card.
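To get a feel for why mirroring back to the built-in screen hurts, here is a rough estimate of the bandwidth needed just to copy finished frames back over the link. It assumes uncompressed 32-bit frames at 1920x1080 and 144fps (the resolution is an assumption for illustration), and it ignores that PCIe is full duplex, driver overhead and Thunderbolt's own overhead, so treat it only as an order of magnitude.

```python
def frame_copy_gb_per_s(width: int, height: int, fps: float, bytes_per_pixel: int = 4) -> float:
    """Bandwidth (GB/s, 1 GB = 1e9 bytes) needed to copy every finished frame back uncompressed."""
    return width * height * bytes_per_pixel * fps / 1e9


# PCIe 3.0 x4: 8 GT/s per lane, 128b/130b encoding, 4 lanes, per direction (~3.94 GB/s)
PCIE3_X4_GB_PER_S = 8e9 * (128 / 130) * 4 / 8 / 1e9

if __name__ == "__main__":
    need = frame_copy_gb_per_s(1920, 1080, 144)
    share = need / PCIE3_X4_GB_PER_S
    print(f"1080p @ 144 fps: {need:.2f} GB/s (~{share:.0%} of a PCIe 3.0 x4 link)")
```

Under these assumptions the copy-back alone takes about 1.2GB/s, roughly 30% of an x4 link, before the game has sent a single texture to the card.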

The specs of the NXL that I used to test are as follows:

- CPU: i7-8086K, not delidded, not overclocked, and I haven't even applied Liquid Pro or IC Diamond, because I'm only using it temporarily :) It's currently cooled through an IC Graphite sheet just laid in place. (So it runs hot.)

- RAM: 8GB + 16GB (since it's a work machine I'd like to try 32GB, but I don't have the budget yet, hehe)

- Primary SSD: 256GB, also in an NVMe slot!

- Storage SSD: Samsung 2TB in the SATA slot

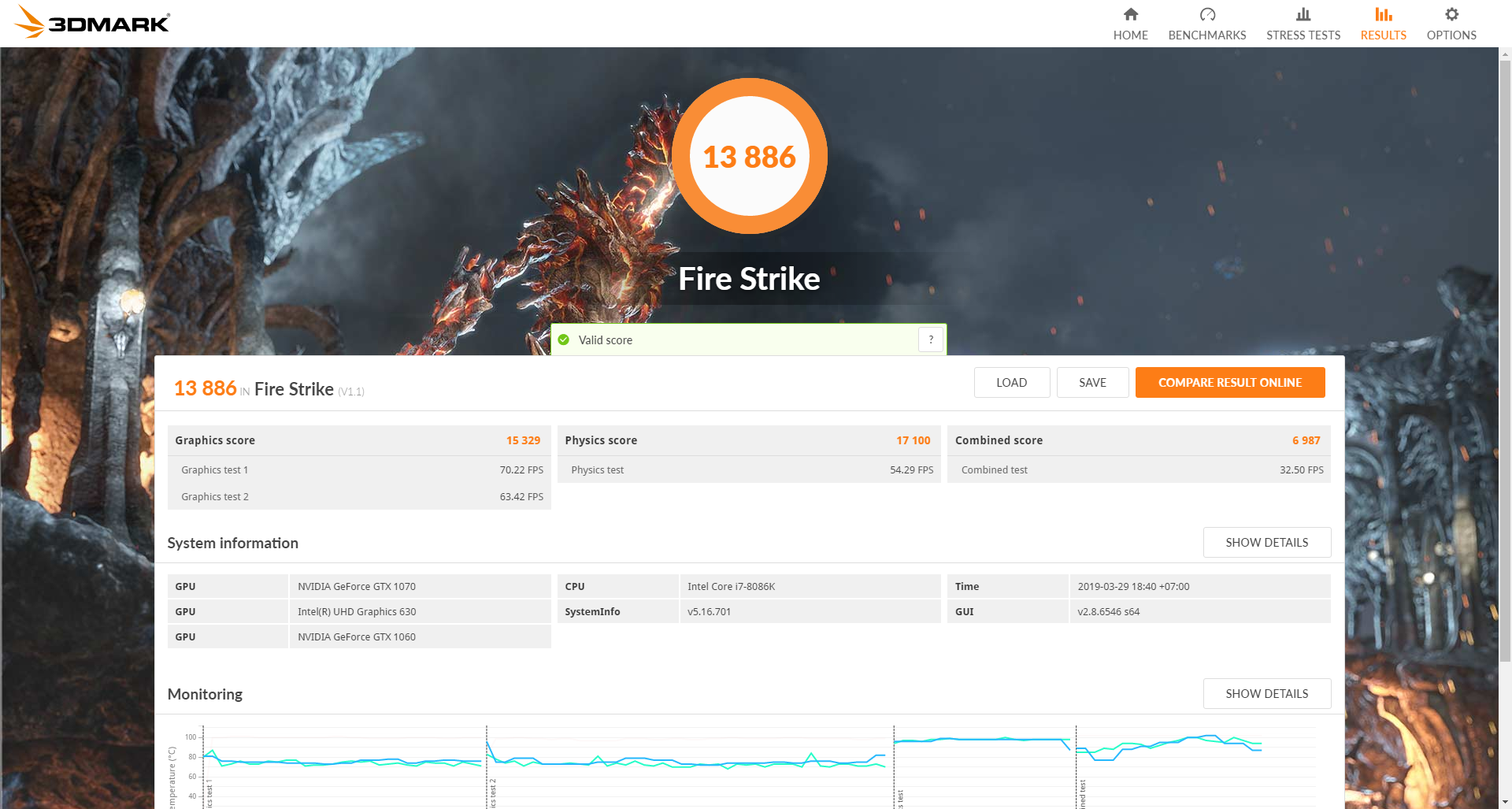

Fire Strike - DirectX 11

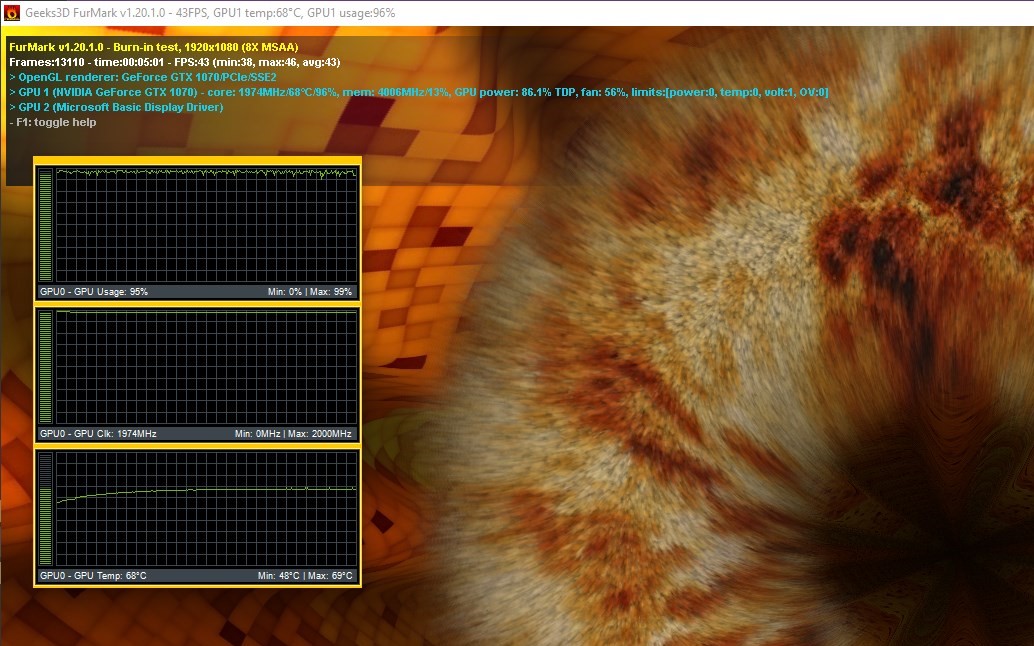

For the first test I chose Fire Strike, representing games that use DirectX 11. It scored 13,886 overall with a graphics score of 15,329. In the Notebookcheck database, the average of 5 sample machines is 14,984 overall and 18,255 graphics. Raising the core clock by 100MHz increased the total score to 14,894 and the graphics score to 16,667. (I didn't compare against the database of UL, the company behind 3DMark, because most of the scores there are overclocked, so I don't really know what a typical score should be.)

With the overclock, our GTX 1070's GPU core runs at 1974MHz, while Notebookcheck's reference is a Founders Edition card at 1683MHz.

It's fair to conclude that DirectX 11 games take a significant hit when the graphics card is connected through NVMe like this: the graphics score is about 16% below the Notebookcheck average at stock, and still about 9% below even after overclocking.
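The size of the gap can be computed directly from the Fire Strike graphics scores quoted above. A minimal sketch, where `gap_percent` is just an illustrative helper:

```python
def gap_percent(measured: float, reference: float) -> float:
    """How far `measured` falls below `reference`, in percent (positive = slower)."""
    return (1 - measured / reference) * 100


# Fire Strike graphics scores quoted in this post
NBC_AVG_GRAPHICS = 18_255  # Notebookcheck average (5 samples)
STOCK_GRAPHICS = 15_329    # external GTX 1070 via the NVMe slot, stock clocks
OC_GRAPHICS = 16_667       # same card with +100 MHz on the core

if __name__ == "__main__":
    print(f"stock:       {gap_percent(STOCK_GRAPHICS, NBC_AVG_GRAPHICS):.1f}% lower")
    print(f"overclocked: {gap_percent(OC_GRAPHICS, NBC_AVG_GRAPHICS):.1f}% lower")
```

With these numbers the stock card lands about 16% below the Notebookcheck average and the overclocked card about 9% below.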

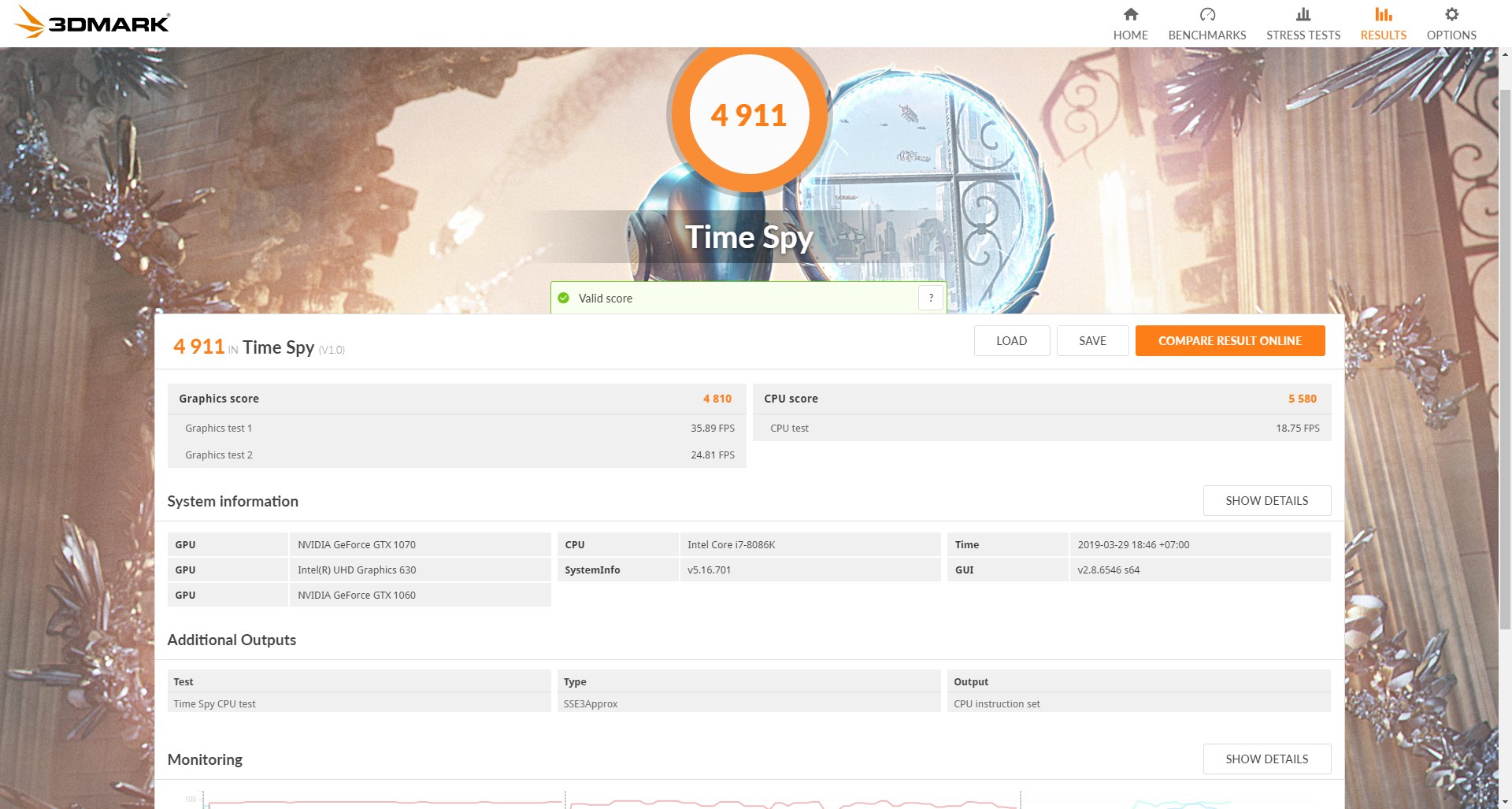

Time Spy - DirectX 12

From last time's API Overhead test on the Shizen, we know that DirectX 12 is less affected by a Thunderbolt 3 connection than DirectX 11. This time, the card received a graphics score of 4810 and an overall score of 4911, against Notebookcheck averages (3 samples) of 5896 and 5679 respectively. In other words, our GTX1070 connected via PCIe x4 scored roughly 14-18% lower.

When overclocked, however, the scores rose to 5875 and 5975 respectively, on par with the Notebookcheck data. Looking at the details of the machines Notebookcheck tested, they are comparable to a card running at 1987MHz, while ours runs at 1974MHz.
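One way to check the "comparable card" claim is to rescale our overclocked graphics score from 1974MHz to Notebookcheck's 1987MHz. This sketch assumes the score scales linearly with core clock, which is only a rough first-order approximation (memory clock, boost behavior and CPU limits also move the score); `scale_to_clock` is a hypothetical helper.

```python
def scale_to_clock(score: float, clock_mhz: float, target_mhz: float) -> float:
    """Rescale a GPU score to another core clock, assuming score is proportional to clock."""
    return score * target_mhz / clock_mhz


OUR_OC_GRAPHICS = 5875   # Time Spy graphics score, external GTX 1070 at 1974 MHz
NBC_AVG_GRAPHICS = 5896  # Notebookcheck average graphics score (3 samples, ~1987 MHz cards)

if __name__ == "__main__":
    scaled = scale_to_clock(OUR_OC_GRAPHICS, 1974, 1987)
    diff = (scaled / NBC_AVG_GRAPHICS - 1) * 100
    print(f"scaled to 1987 MHz: {scaled:.0f} vs Notebookcheck {NBC_AVG_GRAPHICS} ({diff:+.1f}%)")
```

The rescaled score comes out at about 5914, roughly 0.3% above the 5896 average, a negligible difference.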

So for DirectX 12 games, once clock speeds are comparable, connecting the graphics card through the NVMe slot doesn't appear to affect performance.

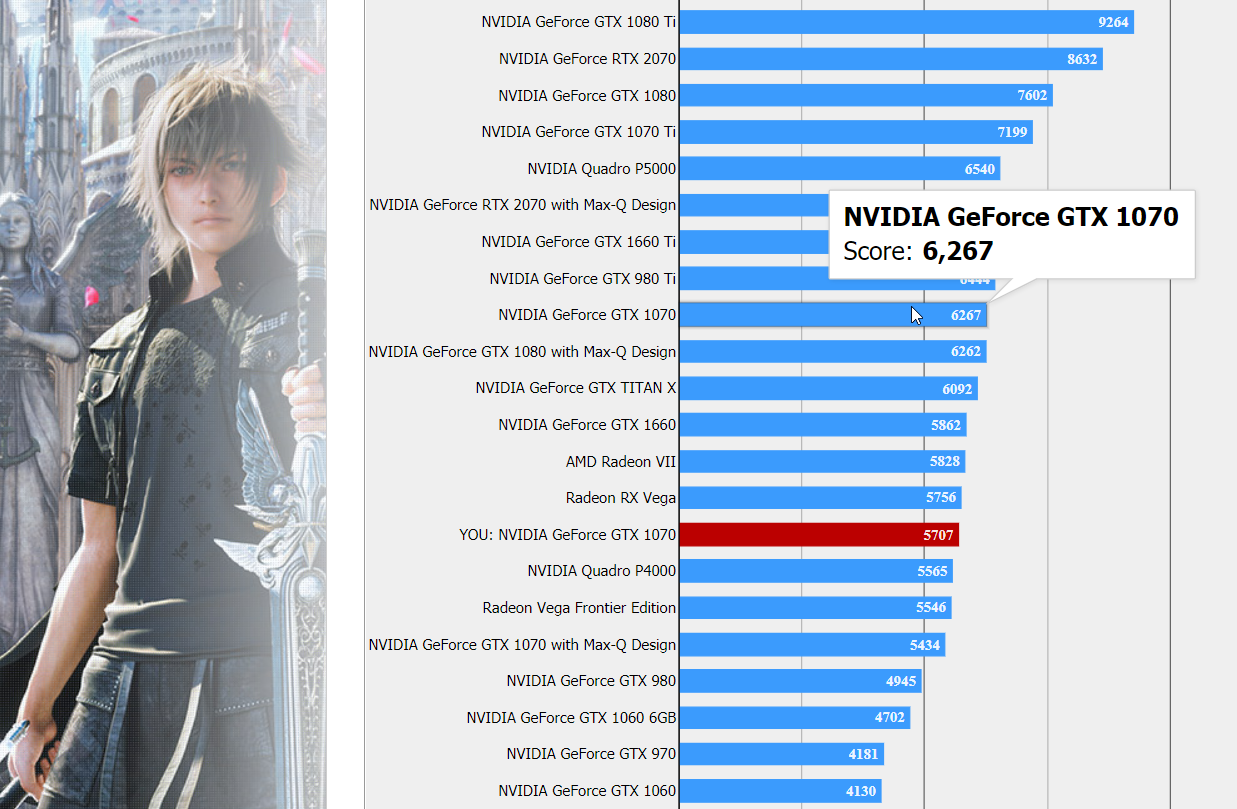

FFXV - DirectX 11

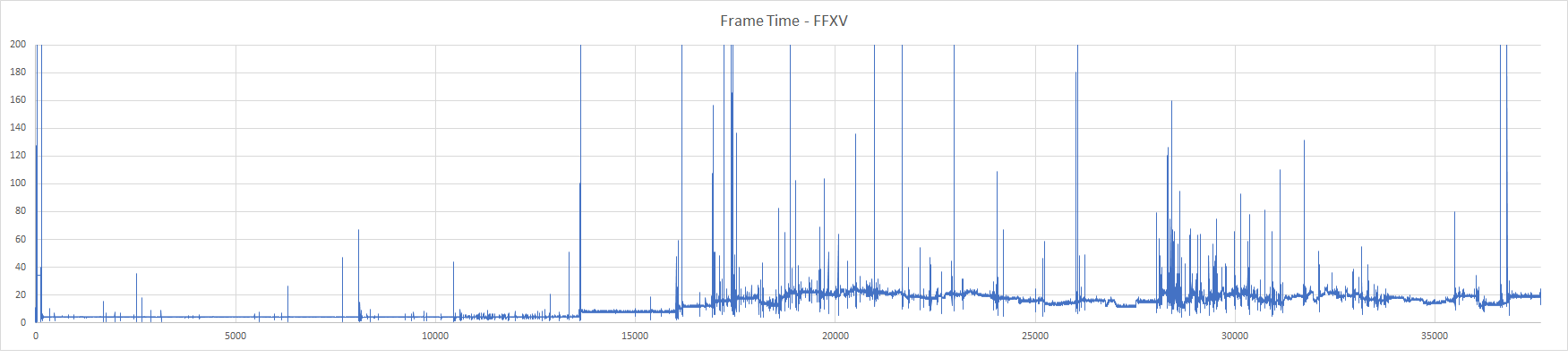

We finish with FFXV, representing open-world games that stream their content: they gradually load scenes, rendering data and game files onto the graphics card almost all the time. This is where the limited bandwidth of PCIe x4 should hurt the most. The game also uses DirectX 11, so based on the earlier results, I predicted a large drop in performance.

But the result wasn't as bad as I expected: 5707 points at stock and 5801 points overclocked. The GTX 1070's score in the FFXV database is 6267 points, which means plugging our GTX1070 into the NVMe slot costs roughly 7-9% in performance.

Test result links:

http://benchmark.finalfantasyxv.com/result/?i=16421513925c9e1f3e3abc9&Resolution=1920x1080&Quality=High

http://benchmark.finalfantasyxv.com/result/?i=1936187515ca35fa10e3fd=High&Resolution=1920x1080&Quality

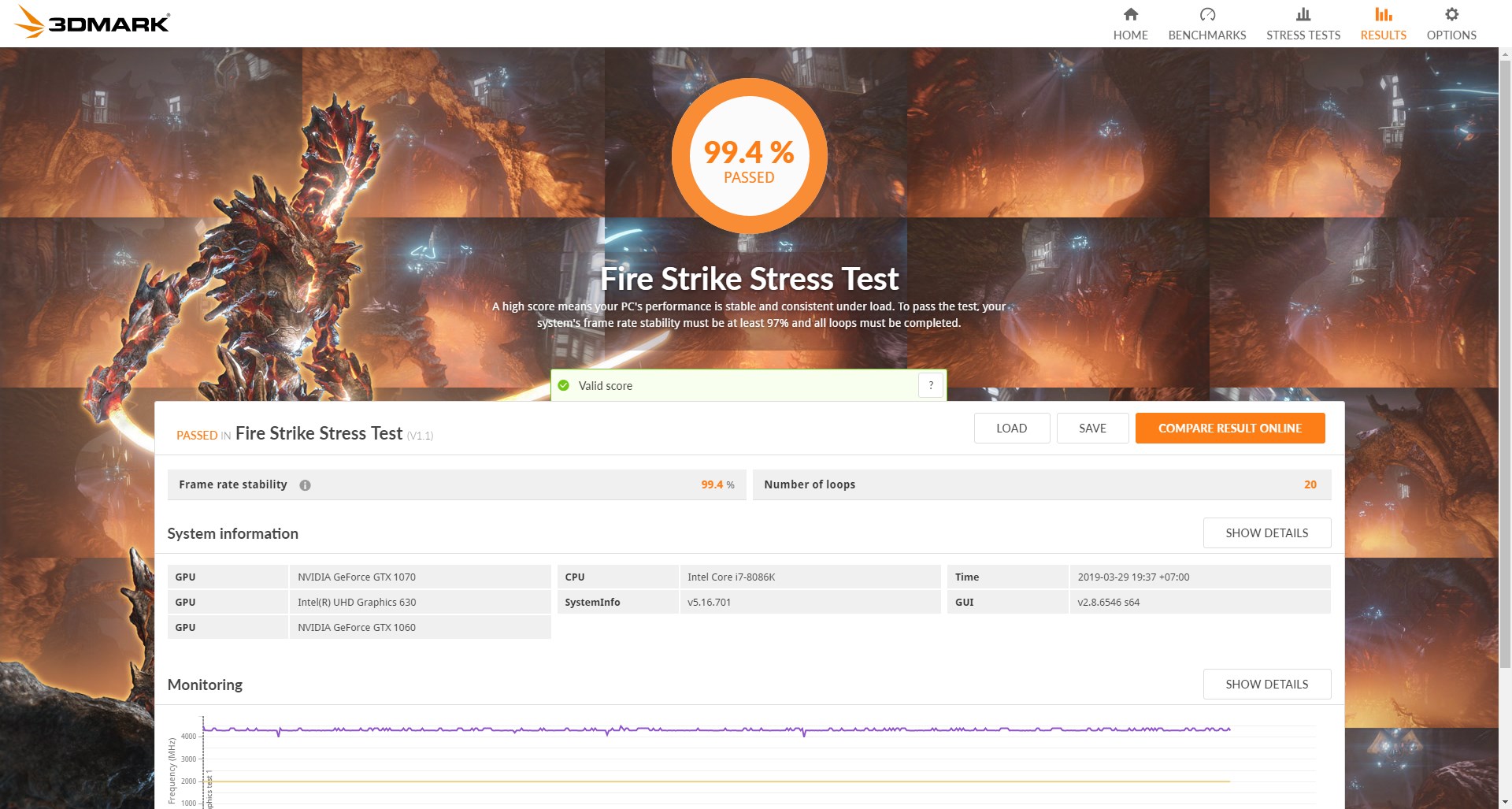

Frame Rate Stability

Another issue I expected to be a problem was frame-rate smoothness. My hypothesis was that, because the link is narrow (x4 instead of the x16 slot a graphics card normally gets), loading game data onto the graphics card would probably cause stutter as well.

But when tested with 3DMark's own Stress Test, the results were amazing: a very stable 99.4% frame rate stability.

What about real-world use? For that I turned to Overwatch and Apex, the two games where any stutter would be most noticeable.

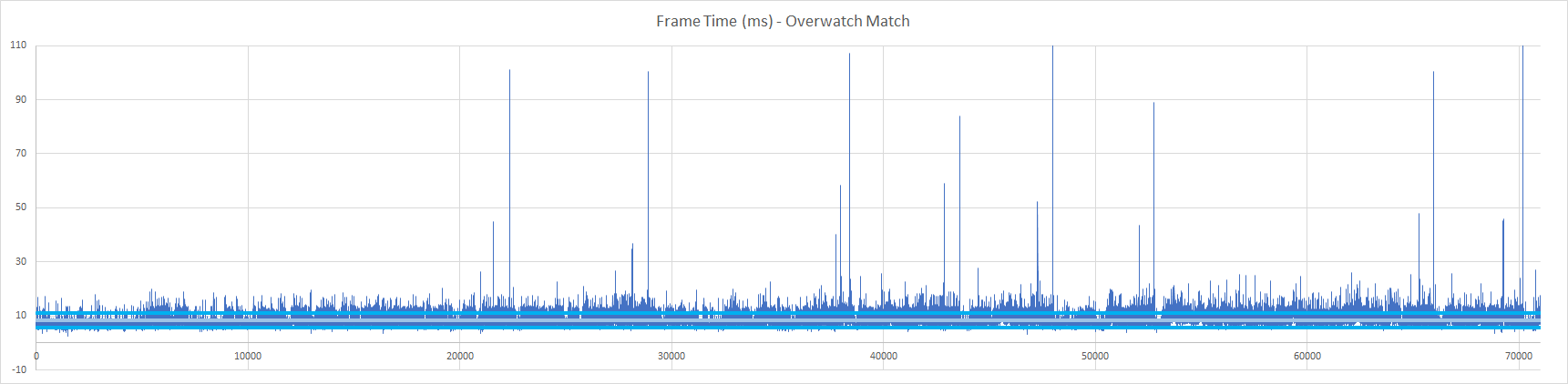

To record frame times (how long each frame stays on screen), I used PresentMon. I'm not expecting to compete in the Overwatch World Championship; plus, I only like playing Mercy, because I'm a micro-management addict: as Mercy you constantly decide who lives, who dies and who should get Damage Boost. I was having fun, so I set the graphics to Epic with Resolution Scale at 100%.

I started recording at the beginning of the match (the menus are capped at 60fps) and stopped when the word Defeat appeared at the end (yes, I lost, lol). The map was Route 66. The average frame time was 8.34 ms, or about 120fps (1000/8.34 ≈ 119.9), and the fps range derived from the standard deviation (Excel's STDEV.P function) was 91-173fps. A few frames took much longer than normal, but only about 10 out of more than 70,000, each under 0.1 seconds, which is very hard to notice.
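The statistics above can be reproduced from a PresentMon capture with a few lines of Python. This is a sketch under assumptions: the post doesn't say exactly how the fps range was derived, but converting mean frame time plus or minus one population standard deviation into fps fits the reported numbers (8.34 ms with a standard deviation of roughly 2.6 ms gives about 91-174fps). The `MsBetweenPresents` column name matches PresentMon 1.x CSV output, and the 50 ms spike threshold is an arbitrary choice for illustration.

```python
import csv
import statistics


def load_frame_times(path: str, column: str = "MsBetweenPresents") -> list[float]:
    """Read per-frame times (ms) from a PresentMon CSV.

    The default column matches PresentMon 1.x; check the header of your own
    capture, since other versions may name it differently.
    """
    with open(path, newline="") as f:
        return [float(row[column]) for row in csv.DictReader(f)]


def frame_time_summary(frame_times_ms: list[float], spike_ms: float = 50.0) -> dict:
    """Average fps, an fps range from mean +/- one standard deviation, and a spike count."""
    mean = statistics.fmean(frame_times_ms)
    sd = statistics.pstdev(frame_times_ms)  # population SD, same as Excel's STDEV.P
    return {
        "avg_fps": 1000 / mean,
        "fps_low": 1000 / (mean + sd),  # longer frames -> lower fps
        "fps_high": 1000 / (mean - sd) if mean > sd else float("inf"),
        "spikes": sum(1 for t in frame_times_ms if t > spike_ms),
    }


if __name__ == "__main__":
    # Tiny synthetic capture: steady ~8 ms frames plus one 700 ms hitch
    sample = [8.0, 8.5, 8.2, 700.0, 8.1]
    print(frame_time_summary(sample))
```

Counting frames above a threshold separately matters because a handful of long hitches barely moves the average fps, yet they are exactly what you feel as stutter.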

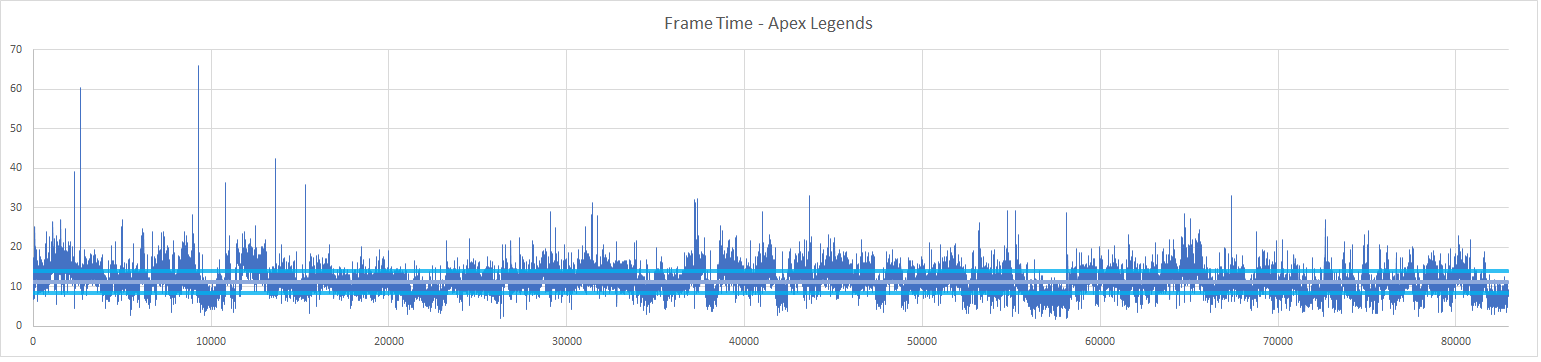

For Apex Legends, I captured data from after landing until the round ended for my squad. The frame rate was fairly consistent here too: 89fps on average, with a standard-deviation range of 71-120fps. A few frames took very long, but only about 5 out of 80,000, and the longest stutter lasted just 0.07 seconds, which you can't feel either.

Overall, connecting the graphics card through the NVMe slot puts us at no noticeable disadvantage in Overwatch or Apex.

With FFXV, though, my hypothesis held up. Because the game loads data onto the graphics card most of the time, it is likely bottlenecked by the x4 bandwidth, and the stutters are clearly noticeable: frame time sometimes reaches 700ms, meaning the picture freezes for almost a second (0.7 seconds). In the stats, however, the average is 80fps and the standard-deviation range is 70-90fps (roughly the first 15,000 frames are the loading screen).

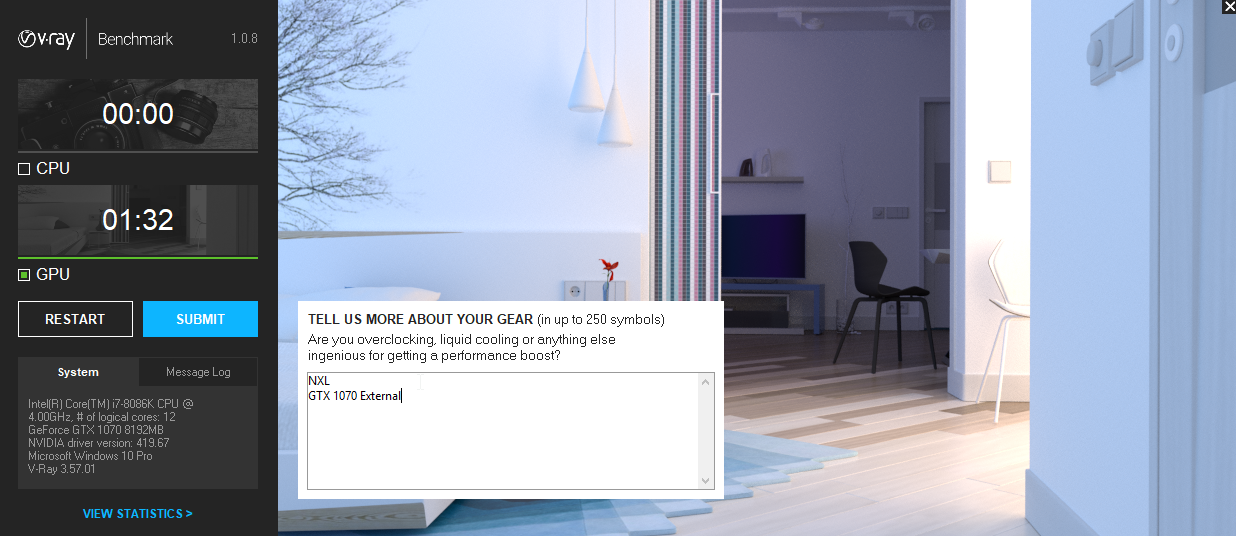

V-Ray Benchmark

And what if we want to connect a graphics card to render V-Ray faster, not just to play games? How does it perform?

Using only the external GTX1070, the render took 1 min 32 s, with no obvious difference from the Chaos Group database. Even more fun: using the internal GTX1060 and the external GTX1070 together cut the time to just 54 seconds, about 41% less time, or roughly 1.7x faster.
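Percent of time saved and speedup are easy to mix up, so it helps to compute both from the two render times. A minimal sketch with an illustrative `render_gain` helper:

```python
def render_gain(before_s: float, after_s: float) -> tuple[float, float]:
    """Return (percent of render time saved, speedup factor)."""
    time_saved_pct = (before_s - after_s) / before_s * 100
    speedup = before_s / after_s
    return time_saved_pct, speedup


if __name__ == "__main__":
    # GTX1070 alone (1 min 32 s = 92 s) vs GTX1060 + GTX1070 together (54 s)
    saved, speedup = render_gain(92, 54)
    print(f"{saved:.0f}% less time, {speedup:.2f}x faster")
```

For 92 s versus 54 s this gives about 41% less time, which is the same thing as a roughly 1.7x speedup.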

Is it ready to use yet?

The results of this test are quite satisfying. With a video card connected through its M.2 PCIe slot, the NXL is probably about as complete as it gets: it has a desktop CPU, a built-in graphics card as fast as the one in a desktop computer, and you can connect another one on top!

For those of you who own the GTX1060 NXL and want an external graphics card kit like this one, please be patient a little longer; it still needs to be finished. Beta prototype units are already out for testing, but there was a family emergency during March, so I didn't touch this project all month. Hopefully after Songkran we'll see it take more shape ;)